Profile

Publications

Visual landmark recognition from Internet photo collections: A large-scale evaluation

In this paper, we present an object-centric, fixed-dimensional 3D shape representation for robust matching of partially observed object shapes, which is an important component for object categorization from 3D data. A main problem when working with RGB-D data from stereo, Kinect, or laser sensors is that the 3D information is typically quite noisy. For that reason, we accumulate shape information over time and register it in a common reference frame. Matching the resulting shapes requires a strategy for dealing with partial observations. We therefore investigate several distance functions and kernels that implement different such strategies and compare their matching performance in quantitative experiments. We show that the resulting representation achieves good results for a large variety of vision tasks, such as multi-class classification, person orientation estimation, and articulated body pose estimation, where robust 3D shape matching is essential.

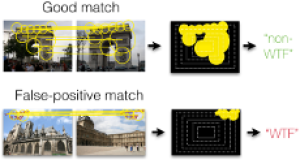

Fixing WTFs: Detecting Image Matches caused by Watermarks, Timestamps, and Frames in Internet Photos

An increasing number of photos in Internet photo collections come with watermarks, timestamps, or frames (in the following called WTFs) embedded in the image content. In image retrieval, such WTFs often cause false-positive matches. In image clustering, these false-positive matches can cause clusters of different buildings to be joined into one. This harms applications like landmark recognition or large-scale structure-from-motion, which rely on clean building clusters. We propose a simple but highly effective detector for such false-positive matches. Given a matching image pair with an estimated homography, we first determine similar regions in both images. Exploiting the fact that WTFs typically appear near the border, we build a spatial histogram of the similar regions and apply a binary classifier to decide whether the match is due to a WTF. Based on a large-scale dataset of WTFs we collected from Internet photo collections, we show that our approach is general enough to recognize a large variety of watermarks, timestamps, and frames, and that it is efficient enough for large-scale applications. In addition, we show that our method fixes the problems that WTFs cause in image clustering applications. The source code is publicly available and easy to integrate into existing retrieval and clustering systems.
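The spatial-histogram idea in this abstract can be illustrated with a minimal sketch. It is not the published detector: the function names, the 4x4 grid, and the fixed threshold that stands in for the trained binary classifier are all assumptions made for illustration. The input is assumed to be a boolean mask marking pixels that look similar in both images after alignment.

```python
import numpy as np

def border_histogram(similar_mask: np.ndarray, grid: int = 4) -> np.ndarray:
    """Normalized grid x grid histogram of where the similar pixels fall."""
    h, w = similar_mask.shape
    # Map every pixel row/column to a histogram cell index.
    rows = np.minimum(np.arange(h) * grid // h, grid - 1)
    cols = np.minimum(np.arange(w) * grid // w, grid - 1)
    hist = np.zeros((grid, grid), dtype=float)
    np.add.at(hist, (rows[:, None], cols[None, :]), similar_mask.astype(float))
    total = hist.sum()
    return hist / total if total > 0 else hist

def border_fraction(hist: np.ndarray) -> float:
    """Share of the histogram mass that lies in the outermost ring of cells."""
    inner = hist[1:-1, 1:-1].sum()
    return float(hist.sum() - inner)

def looks_like_wtf(similar_mask: np.ndarray, threshold: float = 0.9) -> bool:
    """Hypothetical stand-in for the binary classifier: a plain threshold."""
    hist = border_histogram(similar_mask)
    return bool(hist.sum() > 0 and border_fraction(hist) >= threshold)
```

In this sketch a match whose similar region is a strip along the bottom edge is flagged, while a match whose similar region sits in the image center is not. A learned classifier on the full histogram, as the abstract describes, would replace the threshold.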

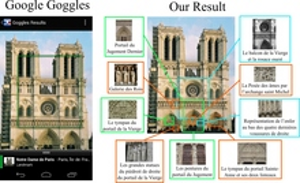

Discovering Details and Scene Structure with Hierarchical Iconoid Shift

Current landmark recognition engines are typically aimed at recognizing building-scale landmarks, but miss interesting details like portals, statues or windows. This is because they use a flat clustering that summarizes all photos of a building facade in one cluster. We propose Hierarchical Iconoid Shift, a novel landmark clustering algorithm capable of discovering such details. Instead of just a collection of clusters, the output of HIS is a set of dendrograms describing the detail hierarchy of a landmark. HIS is based on the novel Hierarchical Medoid Shift clustering algorithm that performs a continuous mode search over the complete scale space. HMS is completely parameter-free, has the same complexity as Medoid Shift and is easy to parallelize. We evaluate HIS on 800k images of 34 landmarks and show that it can extract an often surprising amount of detail and structure that can be applied, e.g., to provide a mobile user with more detailed information on a landmark or even to extend the landmark’s Wikipedia article.

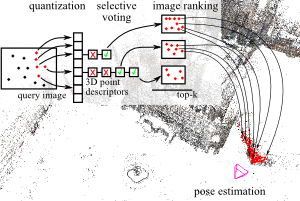

Image Retrieval for Image-Based Localization Revisited

To reliably determine the camera pose of an image relative to a 3D point cloud of a scene, correspondences between 2D features and 3D points are needed. Recent work has demonstrated that directly matching the features against the points outperforms methods that take an intermediate image retrieval step in terms of the number of images that can be localized successfully. Yet, direct matching is inherently less scalable than retrieval-based approaches. In this paper, we therefore analyze the algorithmic factors that cause the performance gap and identify false positive votes as the main source of the gap. Based on a detailed experimental evaluation, we show that retrieval methods using a selective voting scheme are able to outperform state-of-the-art direct matching methods. We explore how both selective voting and correspondence computation can be accelerated by using a Hamming embedding of feature descriptors. Furthermore, we introduce a new dataset with challenging query images for the evaluation of image-based localization.
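As a rough illustration of how a Hamming embedding can make voting more selective, the sketch below lets a database feature vote for its image only if it shares the query feature's visual word and its binary signature is within a small Hamming distance. The data layout, the function names, and the threshold `max_dist` are assumptions for this example; the abstract does not specify the paper's actual voting scheme.

```python
from collections import Counter

def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary signatures."""
    return bin(a ^ b).count("1")

def selective_votes(query, database, max_dist=2):
    """Count votes per database image.

    query:    list of (visual_word, signature) pairs for the query image
    database: dict mapping visual_word -> list of (image_id, signature)
    A vote is cast only when the signatures are at most max_dist bits apart.
    """
    votes = Counter()
    for word, sig in query:
        for image_id, db_sig in database.get(word, []):
            if hamming(sig, db_sig) <= max_dist:
                votes[image_id] += 1
    return votes
```

Without the distance check, every database feature quantized to the same visual word would vote, which is one way false-positive votes can arise.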

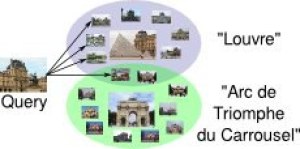

Discovering Favorite Views of Popular Places with Iconoid Shift

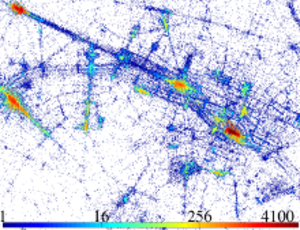

In this paper, we propose a novel algorithm for automatic landmark building discovery in large, unstructured image collections. In contrast to other approaches which aim at a hard clustering, we regard the task as a mode estimation problem. Our algorithm searches for local attractors in the image distribution that have a maximal mutual homography overlap with the images in their neighborhood. Those attractors correspond to central, iconic views of single objects or buildings, which we efficiently extract using a medoid shift search with a novel distance measure. We propose efficient algorithms for performing this search. Most importantly, our approach performs only an efficient local exploration of the matching graph that makes it applicable for large-scale analysis of photo collections. We show experimental results validating our approach on a dataset of 500k images of the inner city of Paris.
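The mode search mentioned above builds on medoid shift, which can be sketched generically on a precomputed distance matrix. This is a simplified illustration, not Iconoid Shift itself: it assumes a Gaussian kernel and an arbitrary symmetric distance matrix `dist` in place of the homography-overlap distance, and it processes all points at once rather than exploring the matching graph locally. The function names and the `bandwidth` parameter are hypothetical.

```python
import numpy as np

def medoid_shift_step(dist: np.ndarray, bandwidth: float) -> np.ndarray:
    """For each point i, pick the data point j that minimizes the
    kernel-weighted sum of distances to the neighbors of i."""
    weights = np.exp(-(dist / bandwidth) ** 2)  # weights[i, k]: kernel centered on i
    cost = weights @ dist                       # cost[i, j] = sum_k weights[i, k] * dist[k, j]
    return cost.argmin(axis=1)

def medoid_shift_modes(dist: np.ndarray, bandwidth: float, max_iter: int = 100) -> np.ndarray:
    """Follow the shift pointers until every point has reached a fixed point (mode)."""
    step = medoid_shift_step(dist, bandwidth)
    current = np.arange(dist.shape[0])
    for _ in range(max_iter):
        nxt = step[current]
        if np.array_equal(nxt, current):
            break
        current = nxt
    return current
```

Each returned index is a data point itself, which is why the modes can be read as representative (iconic) items rather than synthetic averages.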

@inproceedings{DBLP:conf/iccv/WeyandL11,
  author    = {Tobias Weyand and Bastian Leibe},
  title     = {Discovering favorite views of popular places with iconoid shift},
  booktitle = {{IEEE} International Conference on Computer Vision, {ICCV} 2011, Barcelona, Spain, November 6-13, 2011},
  pages     = {1132--1139},
  year      = {2011},
  crossref  = {DBLP:conf/iccv/2011},
  url       = {http://dx.doi.org/10.1109/ICCV.2011.6126361},
  doi       = {10.1109/ICCV.2011.6126361},
  timestamp = {Thu, 19 Jan 2012 18:05:15 +0100},
  biburl    = {http://dblp.uni-trier.de/rec/bib/conf/iccv/WeyandL11},
  bibsource = {dblp computer science bibliography, http://dblp.org}
}

An Evaluation of Two Automatic Landmark Building Discovery Algorithms for City Reconstruction

An important part of large-scale city reconstruction systems is an image clustering algorithm that divides a set of images into groups that should cover only one building each. Those groups then serve as input for structure from motion systems. A variety of approaches for this mining step have been proposed recently, but there is a lack of comparative evaluations and realistic benchmarks. In this work, we want to fill this gap by comparing two state-of-the-art landmark mining algorithms: spectral clustering and min-hash. Furthermore, we introduce a new large-scale dataset for the evaluation of landmark mining algorithms consisting of 500k images from the inner city of Paris. We evaluate both algorithms on the well-known Oxford dataset and our Paris dataset and give a detailed comparison of the clustering quality and computation time of the algorithms.

@incollection{weyand2010evaluation,
  title     = {An evaluation of two automatic landmark building discovery algorithms for city reconstruction},
  author    = {Weyand, Tobias and Hosang, Jan and Leibe, Bastian},
  booktitle = {ECCV Workshop},
  pages     = {310--323},
  year      = {2010},
}

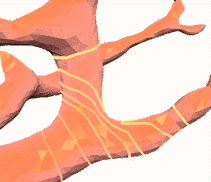

Snakes on triangle meshes

In this work we introduce a new method for representing and evolving snakes that are constrained to lie on a prescribed surface (triangle mesh). The new representation allows the snake resolution to be adapted automatically to the surface tessellation and does not need any (unstable) back-projection operations. Furthermore, it enables efficient and robust collision detection and gives us complete control over the topological behaviour of the snakes, i.e., snakes may split or merge depending on the intended task. Possible applications include enhanced mesh scissoring operations and the detection of constrictions of a surface.