Continuous Adaptation for Interactive Object Segmentation by Learning from Corrections

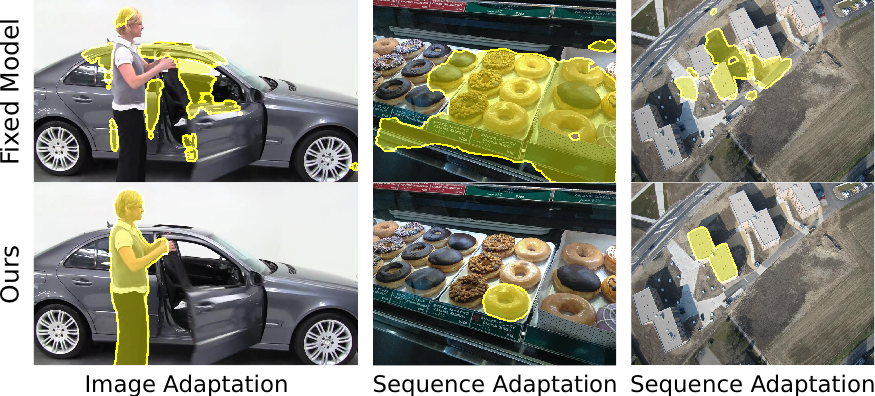

In interactive object segmentation, a user collaborates with a computer vision model to segment an object. Recent works employ convolutional neural networks for this task: given an image and a set of corrections made by the user as input, they output a segmentation mask. These approaches achieve strong performance by training on large datasets, but they keep the model parameters unchanged at test time. Instead, we recognize that user corrections can serve as sparse training examples, and we propose a method that capitalizes on that idea to update the model parameters on the fly to the data at hand. Our approach enables adaptation to a particular object and its background, to distribution shifts in a test set, to specific object classes, and even to large domain changes, where the imaging modality changes between training and testing. We perform extensive experiments on 8 diverse datasets and show that, compared to a model with frozen parameters, our method reduces the required corrections (i) by 9%-30% when distribution shifts are small between training and testing; (ii) by 12%-44% when specializing to a specific class; and (iii) by 60% and 77% when we completely change domain between training and testing.
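To make the core idea concrete, the following is a minimal sketch of treating user corrections as sparse training examples. It is not the paper's implementation: a per-pixel linear classifier stands in for the convolutional network, and the names `predict`, `sparse_loss_and_grad`, and `adapt`, the `(row, col, label)` correction format, the learning rate, and the step count are all assumptions made for illustration. The loss is evaluated only at the pixels the user corrected, and a few gradient steps update the parameters at test time.

```python
import numpy as np


def predict(features, w, b):
    """Per-pixel foreground probability from a linear model.

    features: (H, W, C) array; w: (C,) weights; b: scalar bias.
    The linear model is a hypothetical stand-in for a segmentation CNN.
    """
    logits = features @ w + b
    return 1.0 / (1.0 + np.exp(-logits))


def sparse_loss_and_grad(features, w, b, corrections):
    """Binary cross-entropy over corrected pixels only, with its gradient.

    corrections: list of (row, col, label), label 1 = object, 0 = background.
    This tuple format is an assumption, not taken from the paper.
    """
    rows = np.array([r for r, _, _ in corrections])
    cols = np.array([c for _, c, _ in corrections])
    labels = np.array([y for _, _, y in corrections], dtype=float)

    x = features[rows, cols]                      # (N, C) features at clicks
    p = 1.0 / (1.0 + np.exp(-(x @ w + b)))        # (N,) predictions at clicks
    eps = 1e-12                                   # avoids log(0)
    loss = -np.mean(labels * np.log(p + eps)
                    + (1.0 - labels) * np.log(1.0 - p + eps))

    err = p - labels                              # dLoss/dLogit per click
    grad_w = x.T @ err / len(labels)
    grad_b = err.mean()
    return loss, grad_w, grad_b


def adapt(features, w, b, corrections, lr=0.1, steps=50):
    """Run a few gradient-descent steps on the sparse correction loss."""
    for _ in range(steps):
        _, grad_w, grad_b = sparse_loss_and_grad(features, w, b, corrections)
        w = w - lr * grad_w
        b = b - lr * grad_b
    return w, b
```

In use, one would call `adapt` after each batch of corrections and then `predict` again to obtain the refined mask. A real system would likely also need some mechanism to keep the adapted model from drifting too far from its pre-trained weights; this sketch omits that.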

@inproceedings{Kontogianni20ECCV,

title={Continuous Adaptation for Interactive Object Segmentation by Learning from Corrections},

author={Kontogianni, Theodora and Gygli, Michael and Uijlings, Jasper and Ferrari, Vittorio},

booktitle={ECCV},

year={2020}

}