Multi-Aspect Detection of Articulated Objects

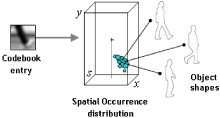

A wide range of methods have been proposed to detect and recognize objects. However, effective and efficient multi-viewpoint detection of objects is still in its infancy, since most current approaches can only handle single viewpoints or aspects. This paper proposes a general approach for multi-aspect detection of objects. As the running example for detection, we use pedestrians, which add a further difficulty to the problem, namely human body articulations. Global appearance changes caused by the different articulations and viewpoints of pedestrians are handled in a unified manner by a generalization of the Implicit Shape Model [5]. An important property of this new approach is that it shares local appearance across different articulations and viewpoints and therefore requires relatively few training samples. The effectiveness of the approach is shown and compared to previous approaches on two datasets containing pedestrians with different articulations and from multiple viewpoints.
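The abstract does not spell out how local appearance is shared across aspects. The following is a minimal, non-authoritative sketch that assumes the general Implicit Shape Model idea: a codebook of local appearance clusters, where each entry stores "occurrences" (offsets from the object center seen in training). Here each occurrence is additionally tagged with a hypothetical aspect label, so a single codebook entry (for example, a head-like patch) can vote for several aspects at once. The names `CodebookEntry`, `cast_votes`, `match_threshold`, and `bin_size` are illustrative assumptions, not taken from the paper. The sketch also uses simple discrete voting bins, not a continuous voting space.

```python
import math
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class CodebookEntry:
    """Hypothetical shared local-appearance cluster."""
    descriptor: tuple[float, ...]
    # Each occurrence is (aspect label, dx, dy): the offset from the object
    # center to where this appearance was observed during training.
    occurrences: list[tuple[str, float, float]] = field(default_factory=list)


def distance(a, b):
    """Euclidean distance between two descriptors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cast_votes(features, codebook, match_threshold=0.5, bin_size=10.0):
    """Accumulate votes for (aspect, center bin) from test features.

    features: list of (x, y, descriptor) extracted from a test image.
    """
    accumulator = defaultdict(float)
    for x, y, desc in features:
        # Keep only entries that match and have at least one occurrence.
        matches = [e for e in codebook
                   if e.occurrences and distance(desc, e.descriptor) <= match_threshold]
        for entry in matches:
            # Split the feature's unit vote over matches and their occurrences.
            weight = 1.0 / (len(matches) * len(entry.occurrences))
            for aspect, dx, dy in entry.occurrences:
                cx, cy = x - dx, y - dy  # predicted object center
                key = (aspect, round(cx / bin_size), round(cy / bin_size))
                accumulator[key] += weight
    return dict(accumulator)


def best_hypothesis(accumulator):
    """Return the (aspect, bin) key with the highest vote mass."""
    return max(accumulator.items(), key=lambda kv: kv[1])


# Toy usage: a head-like entry shared by two aspects, plus a side-view leg entry.
codebook = [
    CodebookEntry((0.0, 0.0), [("side", 0.0, -40.0), ("front", 0.0, -40.0)]),
    CodebookEntry((1.0, 1.0), [("side", -10.0, 30.0)]),
]
features = [(100.0, 60.0, (0.1, 0.0)), (90.0, 130.0, (1.0, 0.9))]
acc = cast_votes(features, codebook)
print(acc)
print(best_hypothesis(acc))
```

In this toy run, the shared head-like entry supports both the "side" and "front" hypotheses at the same center. The leg entry, which only occurs in the side aspect, tips the decision toward "side". This illustrates how one codebook entry can serve several aspects instead of being learned separately for each.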