Computer Vision-Based Gesture Tracking, Object Tracking, and 3D Reconstruction for Augmented Desks

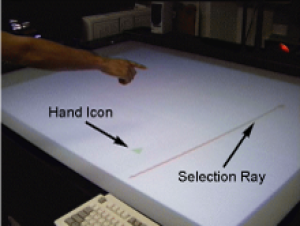

The Perceptive Workbench endeavors to create a spontaneous and unimpeded interface between the physical and virtual worlds. Its vision-based methods for interaction constitute an alternative to wired input devices and tethered tracking. Objects are recognized and tracked when placed on the display surface. By using multiple infrared light sources, the object’s 3D shape can be captured and inserted into the virtual interface. This ability permits spontaneity since either preloaded objects or those objects selected at run-time by the user can become physical icons. Integrated into the same vision-based interface is the ability to identify 3D hand position, pointing direction, and sweeping arm gestures. Such gestures can enhance selection, manipulation, and navigation tasks. The Perceptive Workbench has been used for a variety of applications, including augmented reality gaming and terrain navigation. This paper focuses on the techniques used in implementing the Perceptive Workbench and the system’s performance.

@article{starner2003perceptive,
  title={{Computer Vision-Based Gesture Tracking, Object Tracking, and 3D Reconstruction for Augmented Desks}},
  author={Starner, Thad and Leibe, Bastian and Minnen, David and Westyn, Tracy and Hurst, Amy and Weeks, Justin},
  journal={Machine Vision and Applications},
  volume={14},
  number={1},
  pages={59--71},
  year={2003},
  publisher={Springer}
}